Keyword optimization in the Zero-Click Era requires aligning high-intent phrases with structured data to satisfy generative AI queries. Kelowna SEO strategies now prioritize machine readability to secure placements in AI Answer Blocks. This approach ensures content is processed effectively by Large Language Models that prioritize direct, data-dense responses over traditional blue-link citations in standard search engine results.

The Keyword-to-Entity Shift in Generative Engine Optimization

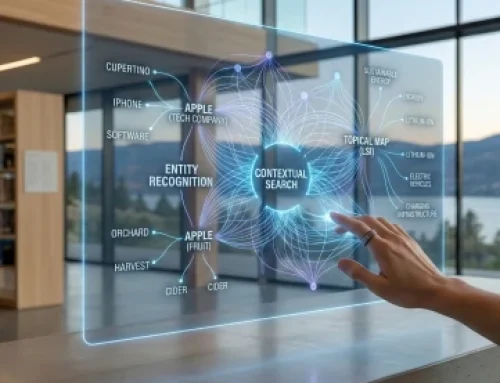

Generative Engine Optimization (GEO) is the technical process of optimizing digital content for Large Language Models (LLMs) and generative search interfaces. Traditional keyword optimization focused on search volume and density to influence ranking algorithms. In the current landscape, the focus has shifted toward entity recognition and the fulfillment of User Intent. User Intent refers to the specific objective a human seeks to achieve when entering a query into a search engine.

AI models do not simply look for matching character strings. They map keywords to established entities within a multi-dimensional vector space. This shift is essential for navigating Zero-Click Search, which occurs when a search engine results page (SERP) provides the full answer to a user’s query without requiring a click-through to an external website. To remain visible, content must serve as a high-authority data source that LLMs can easily parse and cite.

1. Primary Keyword Selection and Intent Alignment

Primary keyword selection is the identification of a single focal term that represents the core topic of a webpage. This selection must be grounded in high-volume data and precise intent mapping. In a GEO framework, the primary keyword functions as the central node of a semantic network.

- High-Intent Target Identification: Selecting keywords that signal a specific action, such as “how to,” “buy,” or “definition of.”

- Volume Analysis: Utilizing data from search consoles to identify terms with sufficient demand to justify optimization efforts.

- Competitive Gap Analysis: Assessing the complexity of the existing AI Overviews for a specific term to determine the likelihood of being cited.

2. H1 Tag and Opening Paragraph Optimization

The H1 tag is the primary HTML header that defines the title of a document for both users and crawlers. Opening paragraphs comprise the first 100 to 150 words of a document. LLMs and traditional crawlers place a high weighting on these initial tokens to determine the topical relevance of the entire file.

- Strategic H1 Placement: The primary keyword must appear at the beginning of the H1 tag to establish an immediate topical anchor.

- The 100-Word Rule: Defining the primary entity and its relationship to the user’s query within the first 100 words of the text.

- Declarative Sentence Structure: Using “is” and “are” statements to provide clear, factual definitions that AI models can extract for summary snippets.
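
The three checks above can be approximated with a short script. This is a minimal sketch rather than a description of how any crawler or LLM actually scores a page: the function name `check_opening`, the 100-word window, and the sample strings are assumptions made for illustration.

```python
import re


def check_opening(h1: str, body: str, keyword: str, window: int = 100) -> dict:
    """Report whether the keyword leads the H1 and appears early in the body."""
    kw = keyword.lower().strip()
    # Only the first `window` words of the body are inspected.
    first_words = " ".join(body.split()[:window]).lower()
    return {
        # True when the H1 begins with the keyword (case-insensitive).
        "h1_starts_with_keyword": h1.lower().strip().startswith(kw),
        # True when the keyword occurs inside the opening window.
        "keyword_in_opening": kw in first_words,
        # True when the opening contains an "is" or "are" statement.
        "has_declarative_verb": re.search(r"\b(is|are)\b", first_words) is not None,
    }


report = check_opening(
    h1="Generative Engine Optimization: A Technical Guide",
    body="Generative Engine Optimization is the process of optimizing content for LLMs.",
    keyword="generative engine optimization",
)
print(report)
```

A check like this only confirms placement. Whether the opening definition is accurate and useful still needs a human review.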

3. Subheading Distribution and Semantic Mapping

Subheading distribution is the use of H2 and H3 tags to organize content into a logical hierarchy. This structure creates a semantic map that allows machine learning models to identify sub-topics within a larger document. A clear hierarchy reduces the computational cost for a crawler to understand the page layout.

- Nested Heading Logic: Ensuring that H3 tags are logically related to the preceding H2 tag to maintain categorical consistency.

- Keyword Variations in Headers: Using secondary keywords and questions in H2 tags to address the “People Also Ask” (PAA) sections of search results.

- Logical Flow: Organizing headers so that the information progresses from broad definitions to specific technical implementations.
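
One way to audit nested heading logic is to look for skipped levels, such as an H4 placed directly under an H2. The sketch below assumes the heading levels have already been extracted from the page as a list of integers; `find_heading_jumps` is a hypothetical helper, not part of any SEO tool.

```python
def find_heading_jumps(levels: list[int]) -> list[int]:
    """Return indexes of headings that skip a level (e.g. H2 followed by H4)."""
    jumps = []
    previous = 1  # assumption: the document opens with a single H1
    for index, level in enumerate(levels):
        # Going deeper by more than one level breaks the hierarchy.
        if level > previous + 1:
            jumps.append(index)
        previous = level
    return jumps


outline = [1, 2, 3, 3, 2, 4, 2]  # H1, H2, H3, H3, H2, H4, H2
print(find_heading_jumps(outline))  # [5]
```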

4. Natural Keyword Integration and Readability

Natural integration is the process of embedding keywords into prose without degrading the linguistic quality or readability of the text. Modern algorithms utilize Natural Language Processing (NLP) to detect “keyword stuffing,” which is the excessive repetition of terms that provides no additional value.

- Keyword Density Management: Maintaining a keyword frequency that reflects natural speech patterns rather than arbitrary percentages.

- Readability Benchmarks: Ensuring that content meets specific ease-of-reading scores to facilitate faster processing by both humans and AI agents.

- Contextual Relevance: Surrounding keywords with related verbs and nouns that reinforce the primary entity’s meaning within a sentence.
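
Keyword frequency is easy to measure, which makes it a useful sanity check against accidental stuffing. The following sketch computes the share of words taken up by a keyword phrase. The function name and tokenization rule are assumptions, and no particular threshold is implied, since the point above is to avoid arbitrary percentages.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Share of words in `text` taken up by occurrences of `keyword`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    kw_words = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not kw_words:
        return 0.0
    n = len(kw_words)
    # Slide a window of n words across the text and count exact phrase matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == kw_words)
    return hits * n / len(words)


sample = "SEO tips for SEO beginners"
print(round(keyword_density(sample, "seo"), 2))  # 0.4
```

A value that climbs well above what the same passage would contain if read aloud is a signal to rewrite, not a rule to enforce mechanically.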

5. URL Structure and Crawler Efficiency

URL structure is the format of the web address assigned to a specific page. Clean, keyword-rich URL slugs improve the efficiency of search engine crawlers. A slug should serve as a compressed indicator of the page content.

- Slug Optimization: Removing “stop words” such as “a,” “the,” and “and” from the URL to keep the slug concise.

- Hyphen Separators: Using a single hyphen to separate words within a URL, which is the industry standard for readability.

- Hierarchy Reflection: Creating URL paths that reflect the site architecture, such as /category/primary-keyword/.
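
The slug rules above can be sketched as a small helper. The stop-word list here is a short illustrative set rather than a standard, and `make_slug` and `make_path` are hypothetical names.

```python
import re

# Illustrative stop-word list; extend or trim it to suit the site.
STOP_WORDS = {"a", "an", "and", "the", "of", "to", "in", "for"}


def make_slug(title: str) -> str:
    """Lowercase the title, drop stop words, and join the rest with hyphens."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    kept = [w for w in words if w not in STOP_WORDS]
    # Fall back to the full word list if every word was a stop word.
    return "-".join(kept or words)


def make_path(category: str, title: str) -> str:
    """Build a /category/primary-keyword/ style path."""
    return f"/{make_slug(category)}/{make_slug(title)}/"


print(make_slug("The Keyword-to-Entity Shift in GEO"))  # keyword-entity-shift-geo
print(make_path("SEO Guides", "A Guide to URL Structure and Crawlers"))
```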

6. Meta Data as Compressed Data Packets

Meta data consists of the title tags and meta descriptions found in the HTML head section of a page. In the context of LLMs, these elements act as compressed data packets that summarize the content’s utility.

- Title Tag Precision: Keeping title tags under 60 characters while including the primary keyword and a unique value proposition.

- Meta Description Utility: Writing 150 to 160 character descriptions that provide a direct answer to a query, increasing the probability of being used in an AI snippet.

- Technical Constraints: Avoiding the use of subjective adjectives in meta data to maintain an objective, high-authority profile.
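
The length limits above lend themselves to an automated check. This sketch assumes the limits stated in this section (titles under 60 characters, descriptions of 150 to 160 characters); `check_meta` is a hypothetical helper and the sample strings are placeholders.

```python
def check_meta(title: str, description: str, keyword: str) -> list[str]:
    """Return a list of problems found in a title tag and meta description."""
    problems = []
    if len(title) >= 60:
        problems.append("title is 60 characters or longer")
    if keyword.lower() not in title.lower():
        problems.append("title is missing the primary keyword")
    if not 150 <= len(description) <= 160:
        problems.append("description is outside the 150-160 character range")
    return problems


issues = check_meta(
    title="Short",
    description="Too short to be useful.",
    keyword="url structure",
)
print(issues)
```

An empty list means the pair passes these mechanical checks. It says nothing about whether the description actually answers the query.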

7. Image Alt Text for Multimodal AI Understanding

Image Alt Text is the descriptive text used within the HTML code of an image. As AI models become multimodal – meaning they process text, images, and video simultaneously – alt text provides the necessary context for an AI to “see” the visual content.

- Descriptive Keyword Use: Including the primary or secondary keyword in the alt text while accurately describing the visual elements of the image.

- Accessibility Compliance: Ensuring that alt text serves its primary purpose of aiding visually impaired users while simultaneously providing data to crawlers.

- Technical Formatting: Using descriptive file names such as “technical-diagram-of-seo-structure.png” rather than generic ones like “image1.jpg,” and writing the alt text itself as a plain-language description of the image.
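
Missing alt text is straightforward to detect with Python's standard `html.parser` module. The sketch below lists every image whose `alt` attribute is absent or empty. The class name `AltTextAudit` and the sample markup are assumptions for illustration.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Collect the src of every <img> whose alt attribute is missing or empty."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # A valueless or empty alt attribute counts as missing here.
        if not (attributes.get("alt") or "").strip():
            self.missing.append(attributes.get("src") or "")


audit = AltTextAudit()
audit.feed(
    '<img src="image1.jpg">'
    '<img src="diagram.png" alt="Technical diagram of SEO structure">'
)
print(audit.missing)  # ['image1.jpg']
```

Purely decorative images may legitimately carry an empty `alt`, so the output is a review list rather than a list of errors.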

8. LSI and Long-Tail Keyword Support

LSI keywords, named after Latent Semantic Indexing, are terms and phrases that are statistically related to a primary keyword because they frequently co-occur with it in a corpus of text. Long-tail keywords are longer, more specific phrases that typically have lower search volume but higher conversion intent.

- Semantic Variation: Integrating LSI keywords to prove to an AI model that the content covers a topic comprehensively.

- Long-Tail Specificity: Addressing “how-to” and “why” questions that align with the conversational nature of voice search and generative AI prompts.

- Knowledge Graph Alignment: Using terms that help the AI model place the content within a specific branch of its internal knowledge graph.

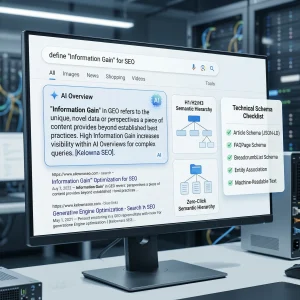

Information Gain and AI Model Preference

Information Gain is a metric used to evaluate the amount of unique information a document provides compared to other documents in the same category. AI models favor content with a high “novelty score,” meaning it provides data points that are absent from their training sets or from other high-ranking results.

To achieve Information Gain, a technical content strategist should include proprietary data, unique case studies, or specialized technical insights. If a document merely repeats the information found on eight other websites, its Information Gain is effectively zero. Providing a unique technical checklist or a specific JSON-LD implementation strategy increases the likelihood that an LLM will cite the page as a primary source.
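
Search engines and LLM providers do not publish a formula for Information Gain, so any calculation is a proxy. As a rough illustration only, the sketch below measures what fraction of a page's distinct terms do not appear in a set of competing pages. The name `novelty_ratio` and the term-overlap approach are assumptions, not the metric any AI model uses.

```python
import re


def _terms(text: str) -> set[str]:
    """Lowercased set of alphanumeric tokens in `text`."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def novelty_ratio(candidate: str, others: list[str]) -> float:
    """Fraction of the candidate's distinct terms absent from all other documents."""
    own = _terms(candidate)
    if not own:
        return 0.0
    # Union of every term seen in the comparison documents.
    seen = set().union(*(_terms(doc) for doc in others))
    return len(own - seen) / len(own)


print(novelty_ratio("alpha beta gamma delta", ["alpha beta", "gamma"]))  # 0.25
print(novelty_ratio("alpha beta", ["alpha beta"]))  # 0.0
```

A page that only restates its competitors scores near zero on this proxy, which matches the intuition behind the concept even though the real signal is certainly more sophisticated.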

Technical Schema Checklist for Keyword Support

Implementing structured data is a requirement for modern SEO. It translates human-readable content into a machine-readable format. Use the following checklist to implement JSON-LD schema:

- [ ] Article Schema: Define the “headline,” “author,” and “datePublished” to establish credibility.

- [ ] FAQPage Schema: Use the “mainEntity” property to list questions and answers that include primary and long-tail keywords.

- [ ] BreadcrumbList Schema: Provide a clear path of the page’s location within the site hierarchy.

- [ ] ImageObject Schema: Describe each key image in a structured data object, using properties such as “contentUrl” and “caption” that mirror its alt text.

- [ ] Technical Performance: Ensure page load times are under 1.5 seconds to prevent crawler timeouts during the indexing phase.
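
The first three checklist items can be generated programmatically. This is a minimal sketch that builds schema.org objects as Python dictionaries and serializes them with the standard `json` module. The helper names, the `example.com` URLs, the sample question, and the date are placeholders, not values from a real site.

```python
import json


def article_schema(headline: str, author: str, date_published: str) -> dict:
    """Article markup with headline, author, and datePublished."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }


def faq_schema(pairs: list[tuple[str, str]]) -> dict:
    """FAQPage markup; each (question, answer) pair becomes a mainEntity item."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def breadcrumb_schema(trail: list[tuple[str, str]]) -> dict:
    """BreadcrumbList markup from an ordered list of (name, url) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)
        ],
    }


def to_script_tag(schema: dict) -> str:
    """Wrap a schema dict in the script tag used to embed JSON-LD in HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(schema, indent=2) + "\n</script>"


# Placeholder values for illustration only.
article = article_schema("Keyword Best Practices for GEO", "Rob", "2024-01-01")
print(to_script_tag(article))
```

Generating the markup from the same data that renders the page helps keep the structured data and the visible content in sync.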

Conclusion

The successful implementation of keyword best practices in the era of generative AI requires a transition from marketing-heavy content to technical, data-dense prose. By prioritizing semantic hierarchy, machine readability, and Information Gain, webmasters can ensure their content remains a primary resource for AI Overviews. Technical efficiency, structured data, and precise keyword placement remain the pillars of visibility in a rapidly evolving search landscape.

Frequently Asked Questions

LLMs process text in tokens, which can be words or parts of words. Keyword density is less important than the “attention” weight an LLM assigns to tokens. Using a keyword in a high-weight position, such as a header, is more effective than high-frequency repetition.

Vector embeddings convert words into numerical coordinates. Content is deemed relevant if its vector coordinates are close to the coordinates of the user’s query. Precise keyword selection helps align these coordinates.
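
Closeness between vectors is commonly measured with cosine similarity. The sketch below uses hand-written three-dimensional toy vectors. Real embeddings come from a model and have hundreds or thousands of dimensions, so the numbers here are assumptions chosen only to show the comparison.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # similarity is undefined for a zero vector; treat as no match
    return dot / (norm_a * norm_b)


# Toy vectors standing in for model-generated embeddings.
query = [0.9, 0.1, 0.0]
page_a = [0.8, 0.2, 0.1]  # points in nearly the same direction as the query
page_b = [0.1, 0.2, 0.9]  # points in a very different direction

print(cosine_similarity(query, page_a) > cosine_similarity(query, page_b))  # True
```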

JSON-LD provides an explicit roadmap of the entities on a page. When an AI model can verify facts through structured data, it is more likely to trust and cite that content in a generative response.

Search engines and LLM crawlers have limited “crawl budgets.” Pages that load in under 1.5 seconds are indexed more frequently and thoroughly, allowing the AI to stay updated on new keyword implementations.

Generative models aim to provide the most helpful response. If your content provides a unique perspective or data point that other sources lack, the model identifies your content as a high-value source for its Information Gain.

Rob is an SEO strategist and digital marketer who has been active in the search engine optimization industry since 2001. With over two decades of experience, he has witnessed the evolution of search from the early days of keyword stuffing to the modern era of AI-driven intent.

His expertise lies in technical SEO, content strategy, and authority building. He specializes in helping websites navigate complex algorithm shifts by focusing on high-quality, human-centric content and robust E-E-A-T principles. Throughout his career, he has successfully managed digital growth for a diverse range of industries – providing a grounded and historical perspective that few in the field possess.

When he is not analyzing search trends or optimizing site architecture, he is often traveling and exploring the outdoors.
